Comparing AWS, Azure, and GCP for Startups in 2026

By Maddy Osman

Senior Content Marketing Manager at DigitalOcean


Choosing a cloud provider is one of the most consequential infrastructure decisions a business can make—and three hyperscalers dominate the field. According to 2025 research from Statista, Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP) currently account for a combined 62% share of the cloud infrastructure market. All three offer hundreds of services across computing, storage, networking, and more. So, where do they actually diverge?

While cloud providers may seem interchangeable, each of these three hyperscalers has distinct strengths and reported challenges that can affect your cloud costs and developer experience. For example, a company deep in the Microsoft ecosystem might lean toward Azure, while one building around AI and big data processing might favor GCP. Read on for a comparison of AWS, Azure, and GCP, and why you might want to consider other cloud providers, including specialized platforms like DigitalOcean.

Key takeaways:

- AWS, Azure, and GCP dominate the cloud market with a combined 62% share, each offering specialized ecosystems ranging from AWS’s massive scale to Azure’s enterprise Microsoft integration to GCP’s data analytics leadership.

- To choose the right cloud provider, compare their strengths across compute, serverless, and AI, but also consider practical factors: where their data centers are located and how well they support your industry’s requirements.

- The major hyperscalers differ in their strategic focus: AWS provides the most granular infrastructure control and AI model flexibility, Azure offers deep integration with the Microsoft ecosystem, and GCP excels in big data analytics and container-native networking.

- DigitalOcean provides a streamlined alternative to hyperscalers by offering an integrated agentic inference cloud architecture and transparent pricing that can reduce the total cost of ownership for AI-native startups and digital-native enterprises.

Experience the power of AI and machine learning with DigitalOcean Gradient™ AI GPU Droplets. Leverage NVIDIA H100, H200, RTX 6000 Ada, L40S, and AMD MI300X GPUs to accelerate your AI/ML workloads, deep learning projects, and high-performance computing tasks with simple, flexible, and cost-effective cloud solutions.

Sign up today to access DigitalOcean Gradient™ AI GPU Droplets and scale your AI projects on demand without breaking the bank.

Overview of top hyperscaler cloud providers: AWS, Azure, and GCP

AWS, Azure, and GCP are the largest cloud providers in the market, and each has a different emphasis that suits different use cases.

Let’s dive into each, including a managed cloud services comparison:

*This “best for” information reflects an opinion based solely on publicly available third-party commentary and user experiences shared in public forums. It does not constitute verified facts, comprehensive data, or a definitive assessment of the service.

Pricing and feature information in this article are based on publicly available documentation as of January 2026 and may vary by region and workload. For the most current pricing and availability, please refer to each provider’s official documentation.

| Solution | Best for* (use cases) | Key features | Pricing |
|---|---|---|---|
| DigitalOcean | Digital-native enterprises, AI-native businesses, and developers seeking simplicity | Integrated agentic cloud with Gradient AI, simplified UI/CLI, and high-performance H100/H200 GPU Droplets | Predictable flat pricing; generous included bandwidth; $0.01/GB egress overages; up to 80% lower AI TCO |
| Amazon Web Services (AWS) | Enterprises needing diverse, global cloud services and granular control | Massive catalog of 200+ services, custom Trainium/Inferentia AI silicon, and Amazon Bedrock for foundation models | Pay-as-you-go; internet egress fees typically $0.05–$0.09/GB; discounts for 1–3 year commitments |
| Microsoft Azure | Businesses integrated with the Microsoft ecosystem and hybrid environments | Exclusive OpenAI partnership (GPT-4o), deep integration with Microsoft 365, and Azure Arc for hybrid management | Pay-as-you-go; Azure Hybrid Benefit for existing licenses; egress fees based on volume/region |
| Google Cloud Platform (GCP) | Businesses focused on AI, big data processing, and multi-cloud | Leadership in Kubernetes, BigQuery for analytics, and Vertex AI with native Gemini multimodal models | Pay-as-you-go with custom machine types; Committed Use Discounts (CUDs); global subsea fiber network |

AI workloads don’t require hyperscaler sprawl. Specialized GPU clouds are giving teams new ways to train and deploy models faster, with fewer trade-offs. See where DigitalOcean fits among modern cloud GPU providers for AI/ML.

What is AWS (Amazon Web Services)?

Launched in 2006, AWS remains the global market leader with a 28% market share as of Q4 2025. AWS provides the most mature and extensive ecosystem of more than 200 services. AWS has solidified its position in the AI infrastructure market through Amazon Bedrock, a fully managed service for scaling foundation models, and Amazon Q, its AI-powered assistant for developers. AWS is preferred by organizations requiring massive scale and the most granular control over their infrastructure.

Key offerings include:

- Compute (EC2): Beyond standard virtual machines, AWS now offers specialized instances powered by its own custom silicon, such as Trainium and Inferentia, designed specifically to lower the cost of training and running AI models.

- Serverless: While AWS Lambda remains an industry standard for event-driven code, AWS has expanded its serverless portfolio with AWS Fargate, which allows you to run serverless containers without managing any underlying EC2 instances.

- AI/ML platforms: Amazon Bedrock is AWS’s flagship AI offering, a fully managed serverless service that enables developers to integrate foundation models (from AI21, Anthropic, Cohere, and Meta) into their apps via an API.

What is Azure (Microsoft Azure)?

Launched in 2010, Azure is the second-largest provider, holding a 20% market share as of late 2025. Azure’s growth has been accelerated by its exclusive partnership with OpenAI, making it the primary home for GPT-4o and other cutting-edge models via Azure AI Studio. Azure excels at “AI-ifying” the enterprise, offering deep integration with Windows-based environments, Microsoft 365, and its widespread Copilot ecosystem.

Key offerings include:

- Compute (Virtual Machines): Azure has leaned into high-performance computing (HPC). Its ND H100 v5-series VMs are specifically built for massive-scale AI workloads, offering integrated NVIDIA H100 Tensor Core GPUs and high-speed networking.

- Serverless: In addition to Azure Functions, Microsoft has seen adoption of Azure Container Apps, a serverless platform built on Kubernetes that lets developers deploy microservices and containerized apps without managing the underlying cluster.

- AI/ML platforms: Through its exclusive partnership with OpenAI, Azure AI Studio and Azure OpenAI Service provide enterprise-grade access to GPT-4o and DALL-E 3, coupled with Azure’s security and compliance frameworks.

What is GCP (Google Cloud Platform)?

While the youngest of the three (started in 2011), GCP is the fastest-growing hyperscaler, capturing 13% of the market in Q3 2025. Historically known for its DevOps-friendly tools and Kubernetes leadership, GCP is now a primary contender in the AI race with its Gemini multimodal models and the Vertex AI platform. GCP is often the choice for data-heavy organizations and startups looking for high-performance networking and advanced data analytics.

Key offerings include:

- Compute (Compute Engine): Google’s standout differentiator is its Cloud TPU (Tensor Processing Unit). The latest TPU v5p is one of the most powerful AI accelerators on the market, offering a cost-effective alternative to NVIDIA GPUs for training massive LLMs.

- Serverless: While GCP still offers Google Cloud Functions, the platform has largely pivoted toward Cloud Run. This is a fully managed serverless environment that runs containers and automatically scales them, providing more flexibility than traditional functions.

- AI/ML platforms: Vertex AI is Google’s unified data and AI platform. It provides access to Gemini, Google’s most capable multimodal model, allowing developers to build, deploy, and scale AI applications with “search-quality” data grounding.


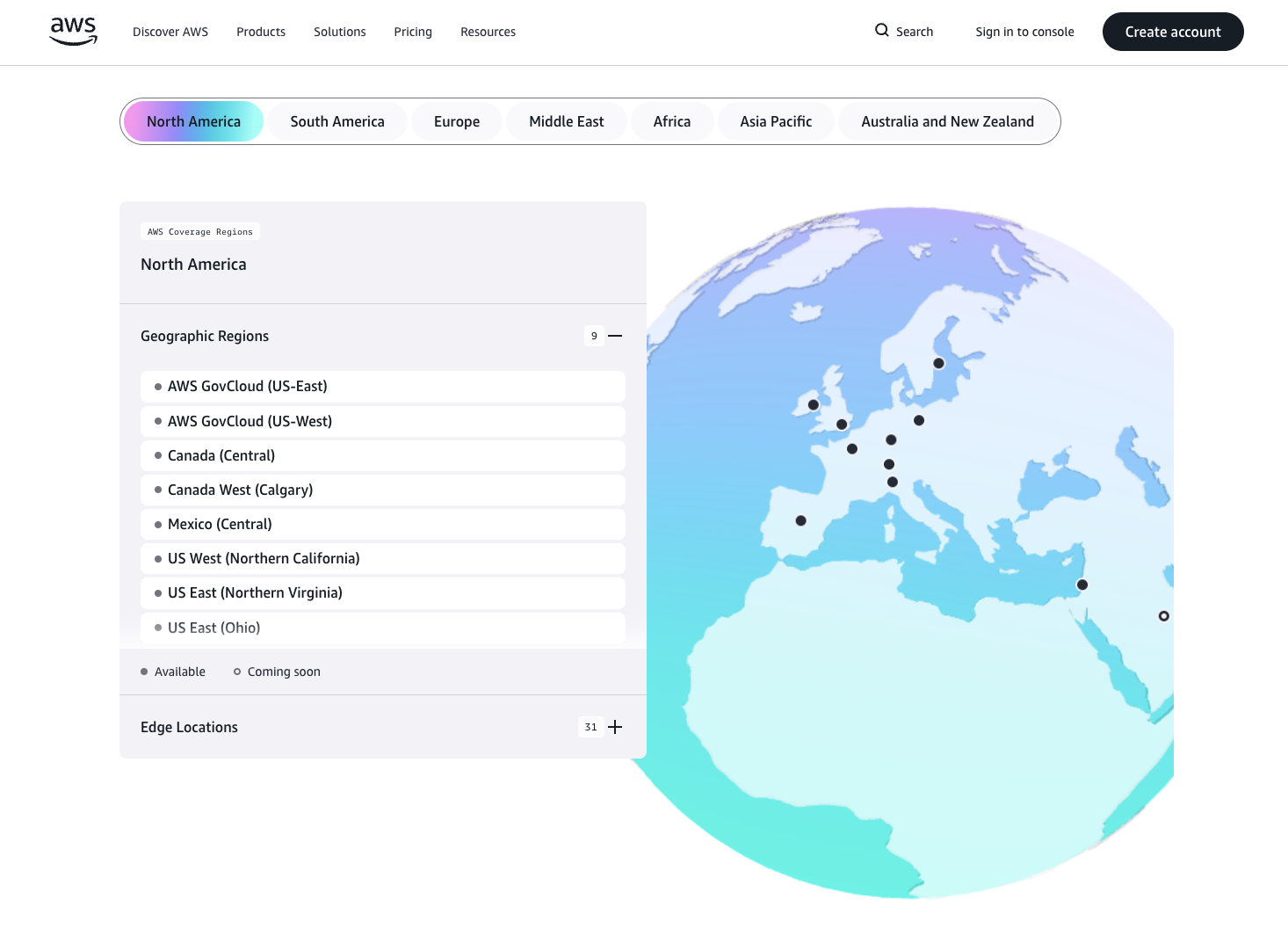

Data centers

For a business, choosing a cloud provider isn’t just about the service list—it’s about where that service lives. While the cloud may seem invisible, it is powered by a massive physical footprint. The geographical distribution of data centers directly dictates three critical business outcomes: latency, cost optimization, and business continuity. Server proximity reduces load times, regional pricing varies significantly, and distributed infrastructure keeps you online during outages.

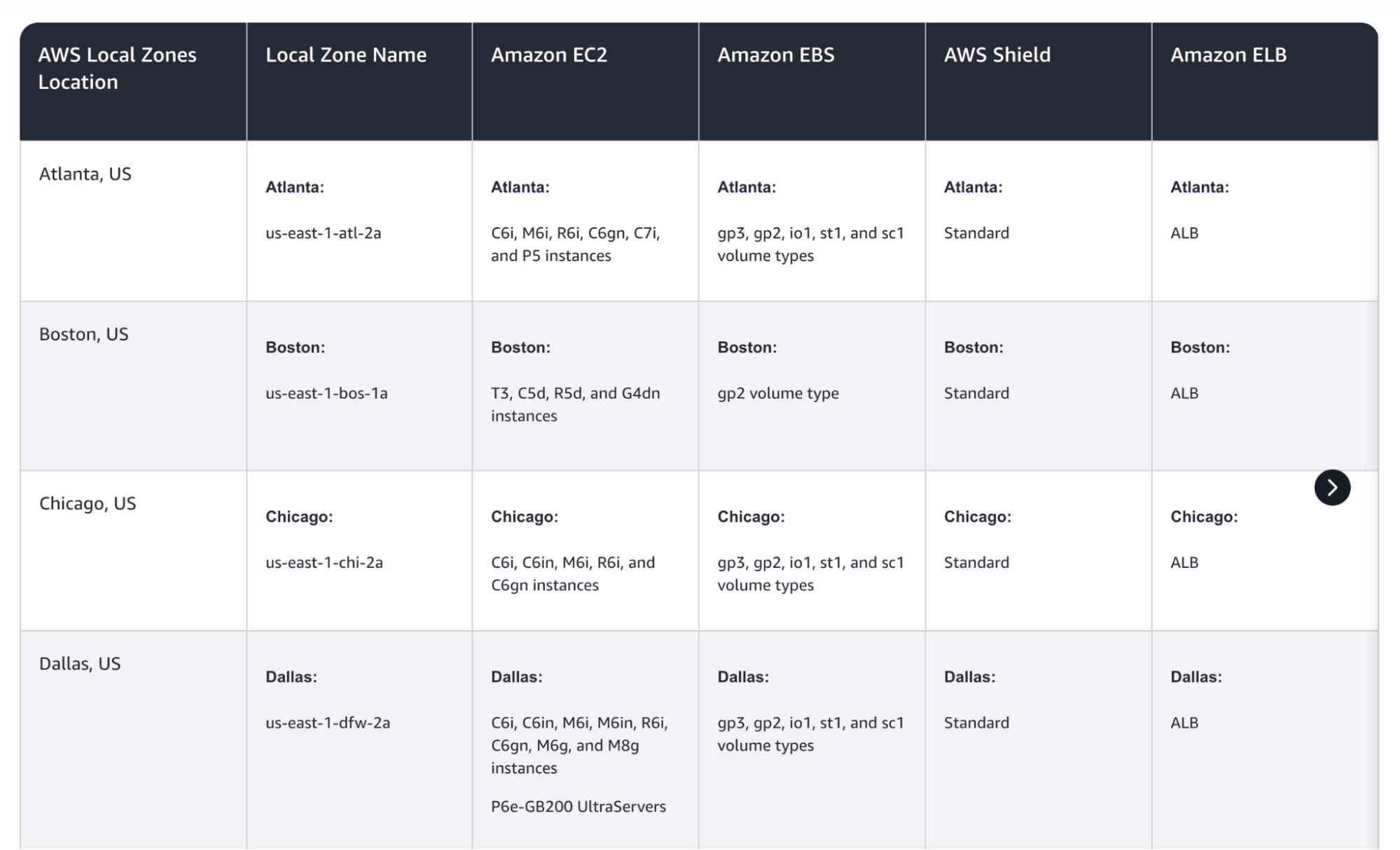

Amazon Web Services

The AWS Cloud encompasses 39 geographic regions and 123 Availability Zones (AZs). Each region ensures business continuity by using at least three isolated AZs—each with independent power and cooling—linked by ultra-low-latency fiber. To further reduce latency, AWS has more than 30 Local Zones and 5G-integrated Wavelength locations. This lets developers deliver sub-10-millisecond performance to end-users without requiring proximity to a full-scale region.

Microsoft Azure

Azure maintains the industry’s largest global footprint with over 70 regions across 35 countries. Its continuity strategy relies on “Region Pairs” spaced at least 300 miles apart to ensure data recovery during massive system failures. Beyond standard infrastructure, Azure offers specialized compliance through Azure Government and “Sovereign Clouds,” specifically engineered to meet strict 2026 data residency and privacy regulations in Europe and China.

Many teams exploring Azure alternatives aren’t looking for more features; they’re looking for less complexity. Azure’s enterprise-focused services and layered pricing can make it challenging to predict costs or move quickly.

Google Cloud Platform

Google operates 49 regions and 148 zones as of early 2026, with a strategic focus on high-growth markets in Asia and Latin America. GCP’s primary differentiator is its private subsea fiber network, which routes traffic away from the public internet to provide more consistent global latency. Additionally, Google facilitates “carbon-aware” computing, providing real-time clean energy usage data to help businesses meet ESG reporting requirements for their cloud workloads.

Cost and pricing

Pricing is one of the most important elements to consider when choosing a cloud provider. Remember, you can be locked in to your chosen provider’s pricing for several years, including as you scale your application or business. Each hyperscaler cloud platform adopts a pay-as-you-go model with varying instance types, storage costs, data transfer fees, and discounts.

Understanding these nuances will help you optimize your cloud spending. The sections below compare AWS, Azure, and GCP costs.

Hyperscalers can come with high costs and complex pricing structures—another area where specialized providers like DigitalOcean shine. Check out DigitalOcean’s pricing calculator to see for yourself.

Amazon Web Services

AWS operates on a consumption-based model, billing resources by the second or hour, which offers high flexibility but requires vigilant cloud monitoring to prevent AWS bill shock. Standard compute costs vary by region and instance family, while AI services like Amazon Bedrock use a distinct token-based model or provisioned throughput for high-volume inference. Networking includes 100 GB of free monthly egress; beyond that, internet egress is typically billed at $0.05–$0.09/GB depending on volume and region. Notably, AWS now offers a one-time egress fee waiver for customers permanently migrating off the platform.

To optimize long-term budgets, organizations can take advantage of Savings Plans for up to 72% discounts in exchange for one- or three-year commitments, a more flexible alternative to traditional Reserved Instances.
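To get a feel for when a commitment pays off, here is a rough sketch in Python. It assumes a simplified model in which the committed rate is a fixed fraction of the on-demand rate and is billed for every hour of the term, whether or not the capacity is used. The function names and the $1.00/hour on-demand rate are illustrative and are not AWS figures; only the 72% maximum discount comes from the paragraph above.

```python
def committed_cost(on_demand_hourly: float, discount: float, hours: int) -> float:
    """Cost of a commitment: the discounted rate is billed for every hour of the term."""
    return on_demand_hourly * (1.0 - discount) * hours


def on_demand_cost(on_demand_hourly: float, utilization: float, hours: int) -> float:
    """Cost of paying on demand only for the share of hours actually used."""
    return on_demand_hourly * utilization * hours


def break_even_utilization(discount: float) -> float:
    """Utilization above which the commitment is cheaper than on demand (simplified model)."""
    return 1.0 - discount


HOURS_PER_YEAR = 8760
RATE = 1.00  # hypothetical on-demand price in USD per hour

for utilization in (0.20, 0.50, 1.00):
    od = on_demand_cost(RATE, utilization, HOURS_PER_YEAR)
    committed = committed_cost(RATE, 0.72, HOURS_PER_YEAR)
    cheaper = "commitment" if committed < od else "on demand"
    print(f"utilization {utilization:.0%}: on demand ${od:,.0f}, "
          f"committed ${committed:,.0f} -> {cheaper}")
```

Under this model the break-even utilization is simply one minus the discount, so at the maximum 72% discount a commitment wins once the workload runs more than roughly 28% of the time. Actual discounts depend on term length, payment option, and instance family, so treat the result as a rough guide.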

AWS costs are rarely obvious upfront—they emerge from complex pricing models, service dependencies, and usage-based fees that are hard to predict. For teams that only need core infrastructure, that complexity can quickly translate into bill shock.

Microsoft Azure

Azure follows a similar pay-as-you-go consumption model, often offering lower rates to enterprise customers through Microsoft Customer Agreements. AI billing has been standardized around token-based pricing for OpenAI and proprietary models, with provisioned throughput available for guaranteed performance. Like AWS, Azure provides a 100 GB monthly egress free tier and waives Azure egress fees for customers permanently migrating to other providers.

A key differentiator is the Azure Hybrid Benefit, which allows businesses to repurpose existing on-premises Windows and SQL Server licenses to reduce cloud virtual machine rates by up to 80%.

Google Cloud Platform

GCP distinguishes itself through customizable compute billing, allowing users to specify exact CPU and memory counts to prevent the over-provisioning common on other clouds. AI pricing for the Gemini family is primarily token-based, though Google also offers provisioned throughput for enterprise teams needing guaranteed capacity. GCP provides 100 GB of free monthly egress and uses a flexible, spend-based “Committed Use Discount” (CUD) model rather than rigid instance reservations, offering up to 70% savings. While basic billing support is free, technical support for production environments follows a tiered structure starting at a flat monthly fee plus a percentage of variable spend.


Developer experience and ease of use

A cloud provider’s interface, tooling, and service design philosophy directly shape how quickly your team can ship. Each hyperscaler takes a different approach—AWS prioritizes flexibility and breadth, Azure leans into Microsoft ecosystem integration, and GCP emphasizes containerization and data workflows. Understanding these philosophies helps you anticipate the learning curve and day-to-day experience your team will face.

Amazon Web Services

AWS provides flexibility, but its developer experience is often characterized by complexity and a steep learning curve. While it is a strong choice for businesses seeking a model-agnostic AI strategy—allowing developers to swap between models like Claude and Llama—navigating its ecosystem of over 200 services can lead to infrastructure sprawl.

Developers can face decision fatigue because the platform often offers multiple, overlapping ways to perform the same task—for example, AWS offers ECS, EKS, Fargate, and App Runner all for running containers, leaving teams to parse the differences before writing a single line of code. Furthermore, the platform’s unintuitive naming conventions and dense management console often require specialized expertise or dedicated cloud architects to manage effectively, making it a powerful but administratively heavy option for agile startups.

Microsoft Azure

Azure’s environment is built around the broader Microsoft software stack. It offers direct integration for teams using GitHub, Visual Studio, and Microsoft Entra ID, which can simplify identity management and deployment pipelines for Windows-centric organizations. For businesses with hybrid requirements, Azure Arc provides a toolset to manage on-premises and edge servers alongside cloud resources from a single interface. Additionally, Azure maintains specialized regions for government and healthcare sectors designed to address specific regulatory requirements for data residency and privacy.

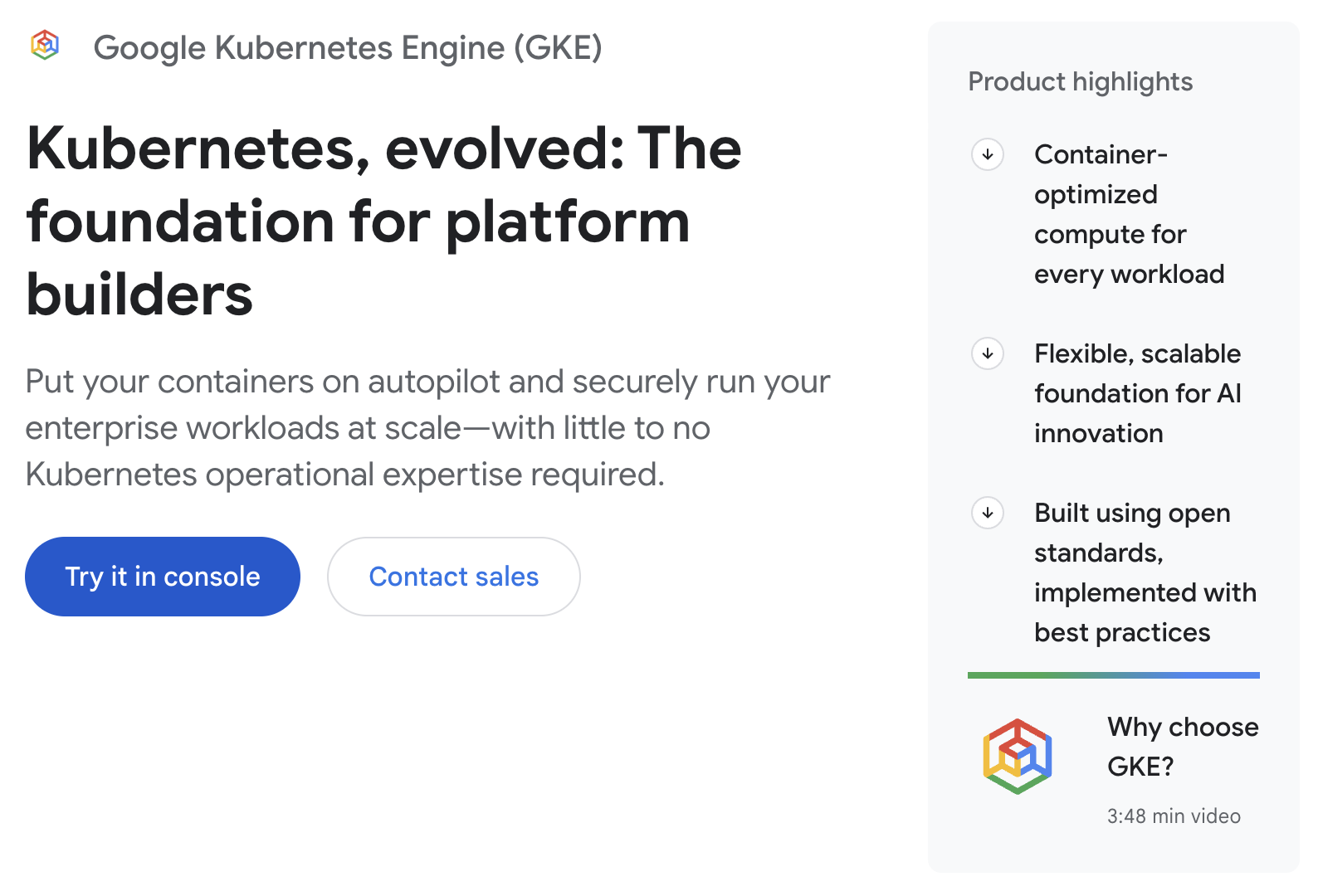

Google Cloud Platform

GCP emphasizes containerization and data-centric workflows. As the originator of Kubernetes, Google’s platform provides integrated container management via Google Kubernetes Engine (GKE), catering to teams that prioritize microservices and DevOps-led deployments. In the AI space, the Vertex AI platform serves as an environment for building applications with the Gemini model family, focusing on multimodal tasks involving text, image, and video data. These services are supported by Google’s private fiber-optic network, which is designed to provide stable data transfer speeds for global applications.

Performance

Performance depends on more than raw compute power—it’s shaped by network architecture, hardware availability, and how close your workloads run to your users. Each hyperscaler takes a different approach to optimizing latency, throughput, and specialized workloads like AI and high-performance computing.

Amazon Web Services

AWS performance is centered on its mature global footprint and hardware-level optimizations. The platform uses the Nitro System to offload virtualization functions to dedicated hardware, which maximizes CPU availability for user applications and provides consistent I/O performance. To address localized latency, AWS offers Local Zones and Wavelength, placing compute resources at the edge of 5G networks and in major metro areas. While its vast catalog of instance types allows for precise workload tuning, the complexity of navigating these options often requires significant architectural oversight to ensure optimal performance.

Microsoft Azure

Azure provides the highest regional density of the hyperscalers, allowing businesses to place data centers in closer physical proximity to specific localized user bases. Performance for hybrid environments is supported by ExpressRoute, which provides private, high-speed connections between on-premises infrastructure and the cloud to reduce latency for cloud-adjacent workloads. For high-performance computing and AI, Azure leverages InfiniBand networking and dedicated NVIDIA H100 clusters.

Google Cloud Platform

GCP’s performance differentiator is its private global fiber-optic network and subsea cable system, which minimizes reliance on the public internet. Its Premium Tier network is designed to ensure traffic enters and exits as close to the user as possible, resulting in fewer hops and more consistent global latency. A key technical advantage is Google’s Global VPC (Virtual Private Cloud) architecture, which allows resources in different regions to communicate over a private IP space without the performance overhead of complex peering or VPNs. For AI-specific workloads, GCP offers custom TPUs designed specifically to accelerate large-scale machine learning training and inference.

Customer support and documentation

When something breaks at 2 a.m., the quality and accessibility of your cloud provider’s support directly impact how quickly you’re back online. Consider how each provider structures its support tiers, what’s included at each price point, and whether documentation is designed to help you self-serve or sends you hunting across scattered resources.

AWS (Amazon Web Services)

AWS offers an expansive library of technical documentation, whitepapers, and community forums. Support is tiered into several plans, starting with a free Basic tier that is limited to account and billing inquiries. For technical troubleshooting, organizations must opt for paid tiers like Business Support+ or Enterprise, which use a percentage-based pricing model that scales up quickly with a user’s monthly cloud spend.

AWS support time commitments focus on “initial response times”—the time it takes for an engineer to acknowledge a ticket—rather than guaranteed resolution times. Additionally, high-level advocacy via a Technical Account Manager is reserved for the most expensive tiers, often leaving smaller teams to rely on general support associates for incident resolution.

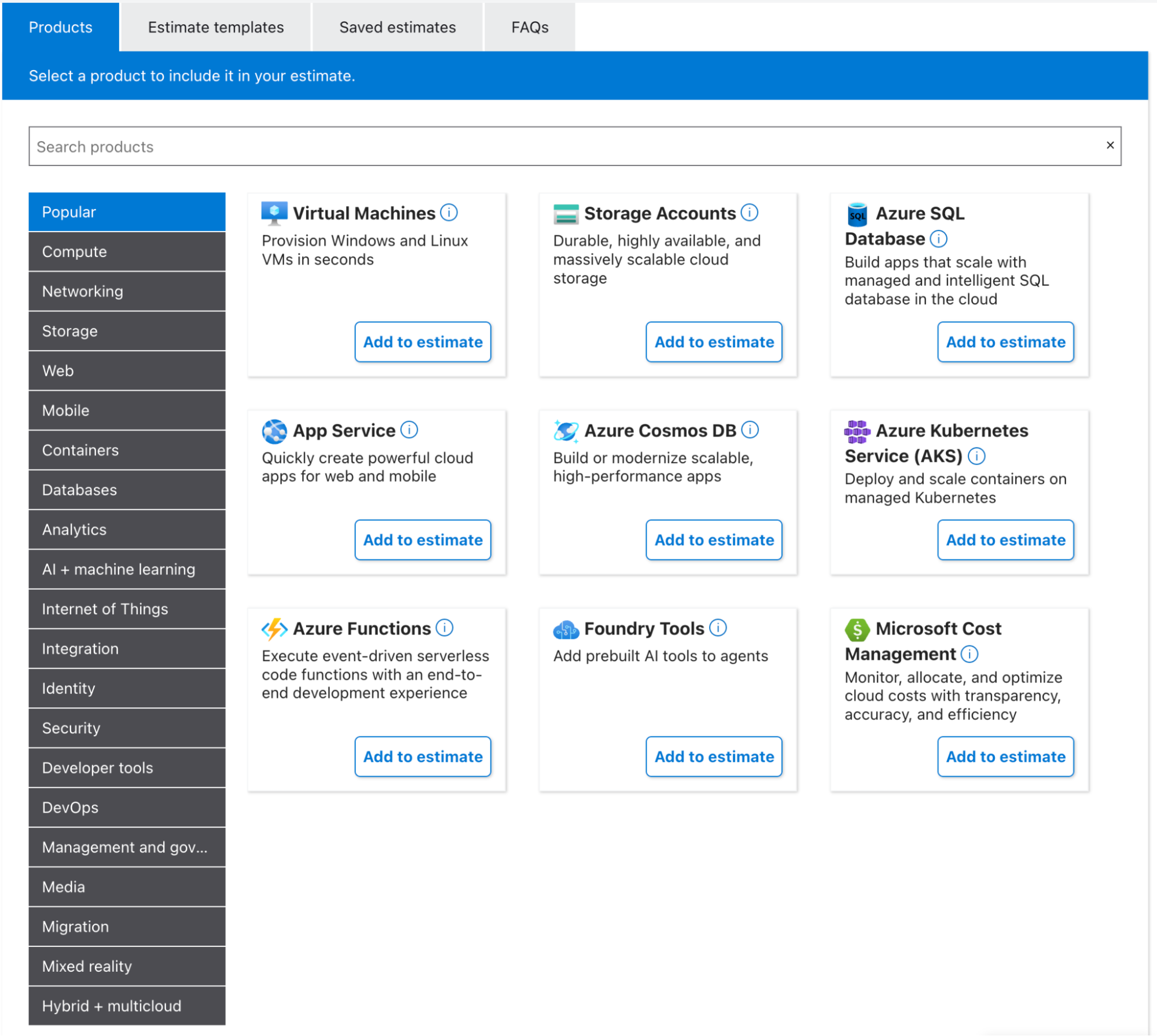

Azure (Microsoft Azure)

Microsoft Azure provides a tiered support structure ranging from its free Basic plan to high-level Unified Support agreements for large enterprises.

- Technical assistance begins at a flat $29/month for the Developer tier, though this is restricted to non-production environments and business-hour responses.

- Production-level support starts with the Standard tier at $100/month, providing 24/7 access and a one-hour response target for critical incidents.

- The Professional Direct tier costs $1,000/month and adds architectural guidance with proactive monitoring.

Azure’s documentation is extensive and covers the entire Microsoft stack—including Office 365 and Windows—offering a unified resource for teams already working within the broader ecosystem. Like other hyperscalers, Azure’s service level agreements prioritize initial response times over guaranteed resolution times, and the most robust support features remain reserved for the highest enterprise tiers.

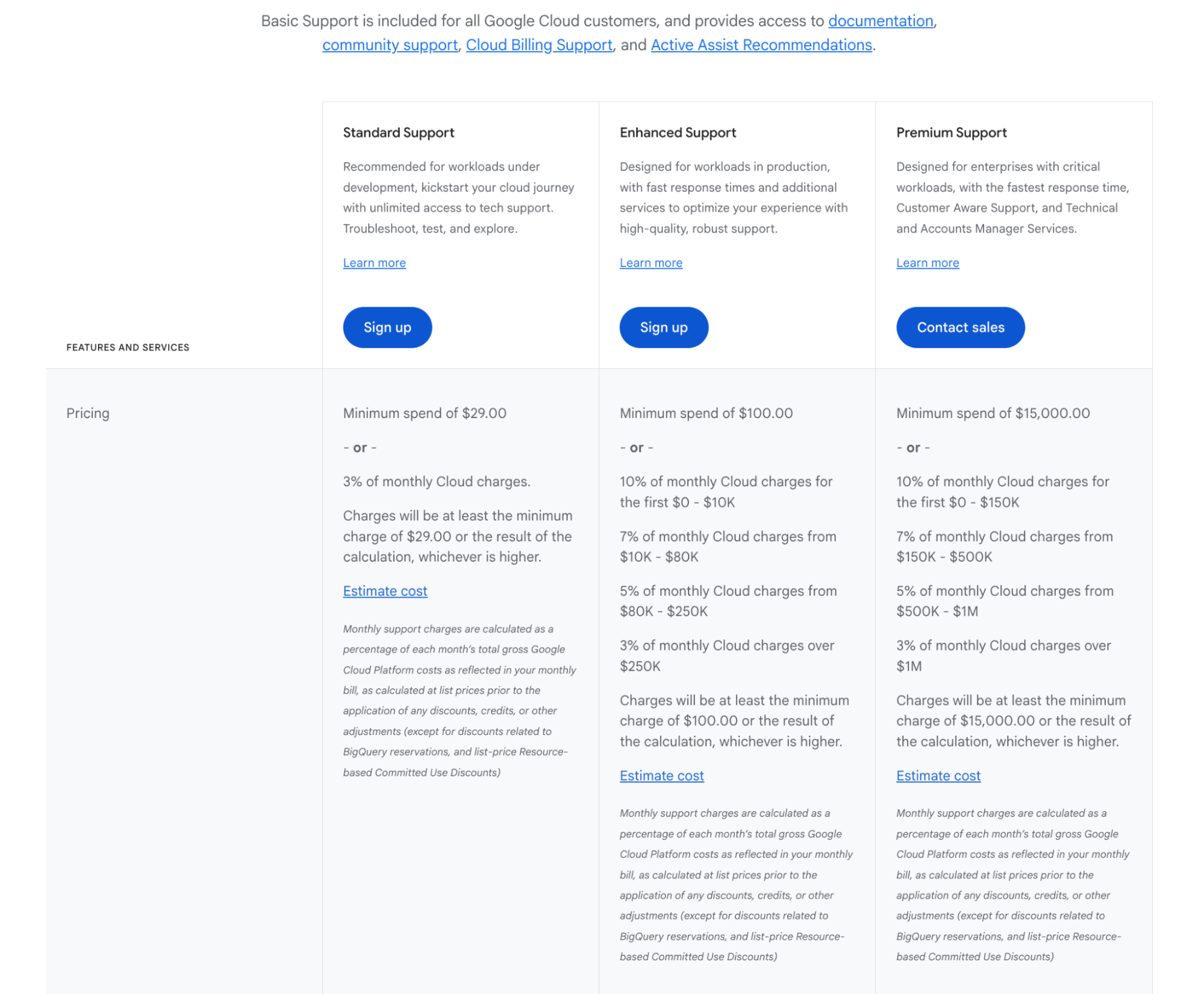

GCP (Google Cloud Platform)

GCP structures its support into four tiers: Basic, Standard, Enhanced, and Premium. The free Basic tier is limited to self-service documentation, community forums, and billing support, with technical assistance requiring a paid subscription. Pricing for Google Cloud support plans follows a percentage-based model—typically ranging from 3% to 10% of monthly cloud spend—which causes support costs to scale automatically as a business grows. While the higher tiers offer expedited response targets (ranging from one hour to 15 minutes for critical incidents), Google Cloud does not provide guaranteed resolution times within its SLAs.

Mission-critical features like dedicated Technical Account Managers and 24/7 support for lower-priority issues are restricted to the most expensive plans, which often require significant monthly minimum commitments.
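The effect of percentage-based support pricing is easier to see with numbers. The Python sketch below applies the 3% and 10% bounds mentioned above to a few hypothetical monthly spend levels. The function name and the `monthly_minimum` parameter are assumptions for illustration; real plans may use tiered percentages and plan-specific minimums that this sketch does not model.

```python
def percentage_support_cost(
    monthly_spend: float, rate: float, monthly_minimum: float = 0.0
) -> float:
    """Support fee as a share of cloud spend, never below the plan minimum."""
    return max(monthly_spend * rate, monthly_minimum)


# Hypothetical monthly cloud bills in USD.
for spend in (1_000, 10_000, 100_000):
    low = percentage_support_cost(spend, 0.03)
    high = percentage_support_cost(spend, 0.10)
    print(f"${spend:>7,} spend -> support between ${low:,.0f} and ${high:,.0f} per month")
```

Because the fee is a fraction of spend, a tenfold increase in the cloud bill brings a tenfold increase in the support bill, even if the number of support tickets stays the same.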

AWS vs Azure vs GCP

While AWS, Azure, and GCP offer similar foundational capabilities, they have developed distinct operational profiles based on infrastructure scale, ecosystem integration, and data specialization. Choosing the right provider involves aligning these strategic strengths with your team’s existing technical skills and long-term budget requirements.

- If you need granular control and AI model flexibility, consider AWS. AWS provides an extensive service catalog for organizations requiring highly customized configurations. It is specifically suited for teams pursuing a model-agnostic AI strategy, allowing developers to switch between various foundation models like Claude and Llama within a single environment.

- If you need Microsoft ecosystem integration and hybrid management, consider Azure. Azure is the natural choice for organizations already utilizing Microsoft 365, Teams, and GitHub. It excels in hybrid environments, using Azure Arc to manage on-premises and edge servers through a unified cloud interface.

- If you need advanced data analytics and container-first scaling, consider GCP. GCP is designed for data-heavy organizations that require real-time insights via tools like BigQuery. As the birthplace of Kubernetes, Google is a leader in container management, supported by a private global fiber network that ensures consistent, low-latency data transfer.

Hyperscalers vs DigitalOcean

Founded as a developer-friendly alternative to the big three, DigitalOcean is a comprehensive agentic cloud designed specifically for digital-native enterprises and AI-native businesses. By integrating a foundational general-purpose cloud with Gradient™ AI, DigitalOcean provides the infrastructure and workflows required to build full-stack AI applications and agents without the operational overhead and cost complexity often found in hyperscale environments.

Here are the reasons why organizations choose DigitalOcean as an alternative to Amazon Web Services, Google Cloud, and Microsoft Azure:

- Integrated agentic cloud architecture: DigitalOcean provides a suite of approximately 30 core products, avoiding the complexity of the hundreds of specialized services found on AWS or Azure. This integrated architecture combines traditional infrastructure (compute, storage, and networking) with Gradient AI, a purpose-built platform for the agent development lifecycle. This allows developers to manage foundational cloud resources and AI agents within a single, unified ecosystem.

- Developer-centric experience and support: At its core, the platform is built for approachability. It features an intuitive UI, a robust API, and a powerful CLI that simplify cloud management. DigitalOcean further supports developers with a library of over 8,000 technical tutorials and community-driven resources. Unlike the often-opaque support tiers of the big three, DigitalOcean provides free basic technical support to all users.

- Simplified AI development: The Gradient AI Inference Cloud enables developers to test, deploy, and iterate on AI agents with minimal infrastructure management. It supports both proprietary and open-source foundation models (including OpenAI, Anthropic, and Llama), so developers can swap models seamlessly without changing underlying code or managing multiple API accounts and billing identities.

- Global performance and reliable SLAs: DigitalOcean currently operates 15 globally distributed data centers, ensuring high-performance cloud services and low-latency connectivity for users worldwide. To support the needs of modern enterprises, DigitalOcean offers industry-leading reliability, including a 99.99% uptime SLA for Droplets and Block Storage, providing the business continuity required for production-level applications.

- Transparent and predictable cloud pricing: DigitalOcean’s pricing is famously straightforward, making it easier for businesses to forecast budgets as they scale. It eliminates the opaque egress fees and inter-region transfer charges common when working with hyperscalers. Virtual machines come with generous included bandwidth, and overages are charged at a flat, transparent rate (typically $0.01/GB). For inference-heavy AI workloads, DigitalOcean can offer up to an 80% lower total cost of ownership compared to traditional hyperscalers.

Choosing the right platform requires a side-by-side look at performance, support, and total cost of ownership.

AWS, Azure, and GCP FAQ

Is DigitalOcean cheaper than AWS, Azure, or GCP?

DigitalOcean is generally more budget-friendly than hyperscalers, offering up to an 80% lower total cost of ownership for inference-heavy AI workloads. Its pricing is straightforward with no opaque egress fees or inter-region transfer charges, making it easy to forecast budgets. Additionally, virtual machines come with generous included bandwidth, and overages are charged at a flat, transparent rate of typically $0.01 per GB.

When should you choose DigitalOcean over a hyperscaler?

You should choose DigitalOcean if you are a digital-native enterprise or AI startup looking for a developer-friendly alternative that avoids the operational overhead and cost complexity of the big three. It can be ideal for teams that want an integrated agentic cloud architecture to build full-stack AI applications without managing hundreds of specialized services. DigitalOcean also provides basic technical support at no charge and a library of over 8,000 tutorials to help ensure an approachable management experience.

How do cloud egress costs compare across providers?

Hyperscalers like AWS typically charge between $0.05 and $0.09 per GB for data egress, with the exact rate varying by volume and region. In contrast, DigitalOcean includes generous bandwidth in its plans and charges a transparent rate of $0.01 per GB for any overages. This can make DigitalOcean a more predictable and appealing option for applications with high data transfer requirements.
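As a rough illustration of how these rates add up, the Python sketch below bills only the gigabytes above an included allowance at a single flat rate. The per-GB rates come from the answer above and the 100 GB free tier from the pricing section; the 10,000 GB monthly transfer and the 1,000 GB included allowance in the last scenario are made-up figures. Real hyperscaler pricing is tiered by volume and region, which this simplification ignores.

```python
def egress_cost(transfer_gb: float, included_gb: float, rate_per_gb: float) -> float:
    """Bill only the gigabytes above the included allowance, at a flat rate."""
    billable_gb = max(transfer_gb - included_gb, 0.0)
    return billable_gb * rate_per_gb


TRANSFER_GB = 10_000  # hypothetical monthly outbound transfer

# name -> (included GB per month, USD per GB above the allowance)
scenarios = {
    "hyperscaler, $0.09/GB": (100, 0.09),
    "hyperscaler, $0.05/GB": (100, 0.05),
    "flat $0.01/GB overage": (1_000, 0.01),  # included allowance is an assumption
}

for name, (included_gb, rate) in scenarios.items():
    cost = egress_cost(TRANSFER_GB, included_gb, rate)
    print(f"{name}: ${cost:,.2f} per month")
```

Swapping in your own transfer volume and the allowance from your actual plan gives a quick first estimate before turning to a provider's pricing calculator.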

Which cloud provider is best for startups?

DigitalOcean is the comprehensive AI inference cloud designed specifically for AI-native businesses and startups that need to scale projects on demand without breaking the bank. Its Gradient AI Inference Cloud allows developers to test, deploy, and iterate on AI agents with minimal infrastructure management while supporting both proprietary and open-source models. The platform’s focus on simplicity and predictable pricing helps companies focus on building products rather than managing complex cloud sprawl.

Is DigitalOcean suitable for enterprise workloads?

Yes, DigitalOcean supports production-level enterprise applications with a 99.99% uptime SLA for Droplets and Block Storage across 15 globally distributed data centers. For demanding AI and machine learning tasks, it offers high-performance Gradient AI GPU Droplets powered by NVIDIA H100 and H200 GPUs. The platform is also enabled for HIPAA requirements and SOC 2 compliant, providing the security and reliability standards required for modern enterprise environments.
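To put an uptime percentage in concrete terms, the short Python sketch below converts it into the maximum downtime allowed over a period. It assumes a 30-day month for simplicity, and the function name is illustrative; how downtime is actually measured and what is excluded are defined by each provider's SLA terms, not by this calculation.

```python
def allowed_downtime_minutes(uptime_percent: float, period_hours: float) -> float:
    """Maximum downtime in minutes that still meets the given uptime percentage."""
    return period_hours * 60 * (1 - uptime_percent / 100)


HOURS_PER_MONTH = 30 * 24  # simplifying assumption: a 30-day month

for sla in (99.9, 99.95, 99.99):
    minutes = allowed_downtime_minutes(sla, HOURS_PER_MONTH)
    print(f"{sla}% uptime -> {minutes:.2f} minutes of downtime per month")
```

At 99.99%, the allowance works out to about 4.3 minutes in a 30-day month, compared with about 43 minutes at 99.9%.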

Build and scale faster with the DigitalOcean Inference Cloud

DigitalOcean provides a streamlined alternative to hyperscale complexity, designed to give you everything you need to build, deploy, and scale—from general-purpose Droplets to high-performance AI infrastructure. DigitalOcean focuses on simplicity, predictable pricing, and the direct support your team needs to move from idea to production.

Just ask NoBid: when their high-traffic ad platform outgrew AWS, they migrated to DigitalOcean in just 90 days. The move resulted in a 30% reduction in cloud costs and gave their team direct access to DigitalOcean engineers to support them in serving hundreds of billions of monthly requests.

Here’s why digital-native businesses choose DigitalOcean:

- Comprehensive infrastructure: Scalable Droplets, managed Kubernetes, and databases designed for production-level reliability.

- AI-ready solutions: Gradient AI and high-performance GPU Droplets (NVIDIA H100/H200) for training and deploying AI agents at scale.

- Transparent pricing: Predictable monthly costs with generous bandwidth and no opaque egress fees.

- Enterprise-grade standards: HIPAA-eligible and SOC 2 compliant infrastructure with a 99.99% uptime SLA.

Sign up today to scale your next project on a cloud that stays out of your way. For custom migrations or GPU allocations, contact our sales team to see how DigitalOcean can reduce your total cost of ownership.

Any references to third-party companies, trademarks, or logos in this document are for informational purposes only and do not imply any affiliation with, sponsorship by, or endorsement of those third parties.

